Which makes them both a goldmine of customer insight and a complex, painfully difficult task to tag and categorize. It was originally written by the following contributors.Customer support conversations are rich, lengthy, and come in high volumes. New incoming content will be appropriately tagged by running NLP inference. As users search for content by keywords, this multi-class classification process enriches untagged content with labels that will allow you to search on substantial portions of text, which improves the information retrieval processes. Potential use casesīy using natural language processing (NLP) with deep learning for content tagging, you enable a scalable solution to create tags across content.

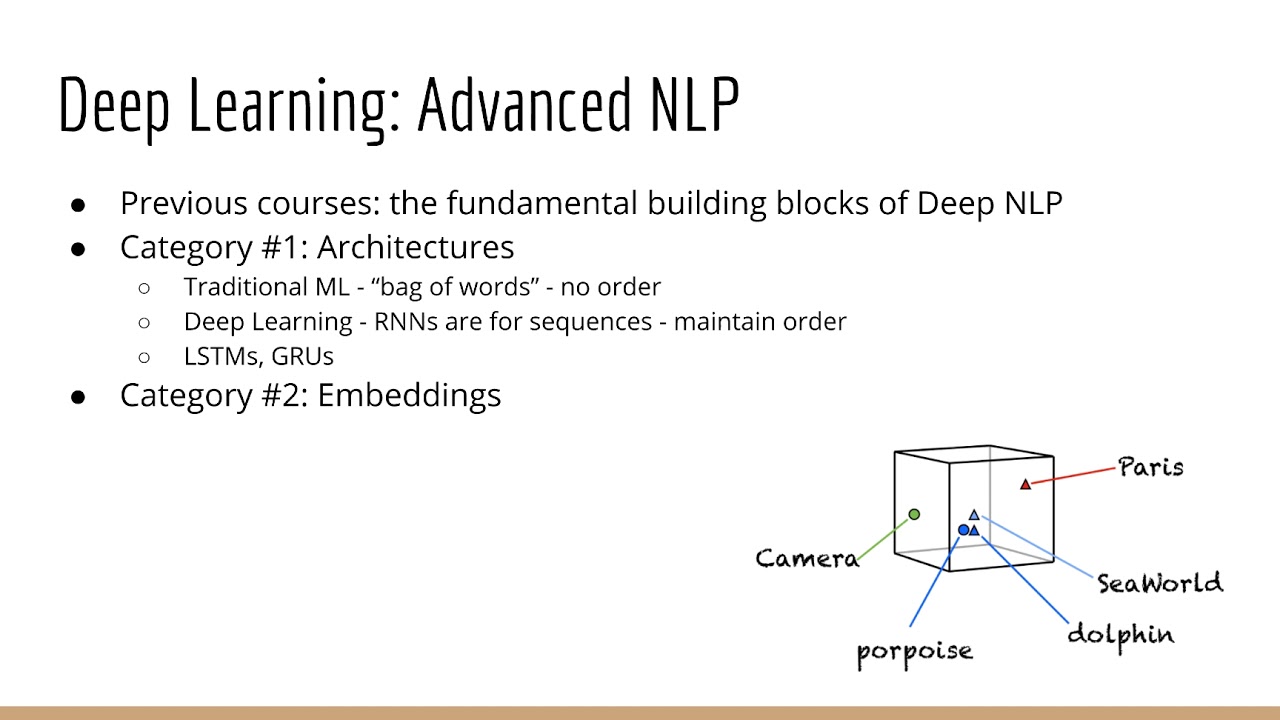

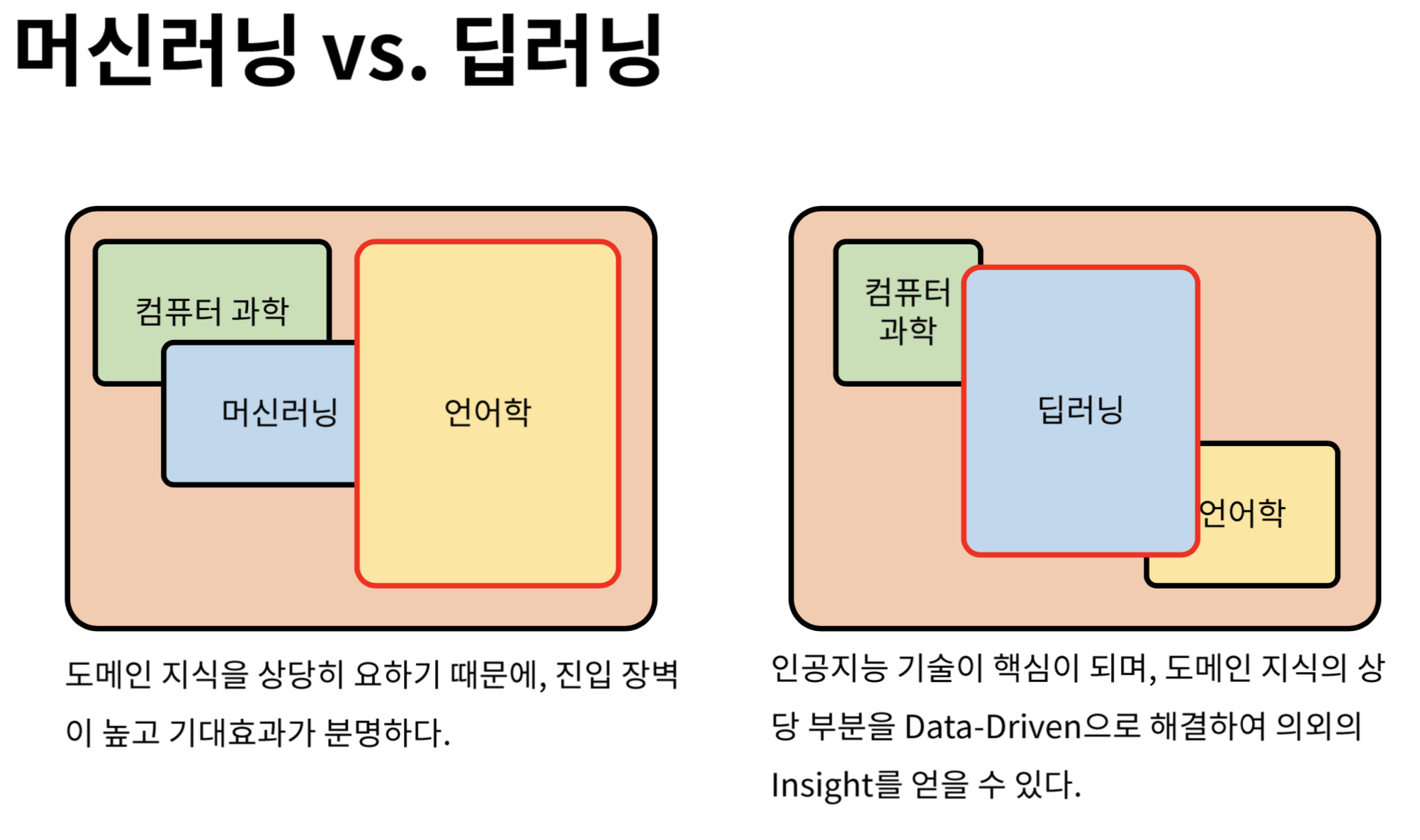

Mislabeled content is difficult or impossible to find when it's needed later. Because users don't have lists of commonly searched terms or a deep understanding of the site structure, they frequently mislabel content. Often, however, content tagging is left to users' discretion. Social sites, forums, and other text-heavy Q&A services rely heavily on content tagging, which enables good indexing and user search. Data Lake Storage for Big Data Analytics.The model would run as batch inference and store the results in the respective datastore. Model results can be written to a storage option in file or tabular format, then properly indexed by Azure Cognitive Search. The model can be deployed through Azure Kubernetes Service as real-time endpoints. Endpoints can be made available to a front-end application. The model can be deployed through Azure Kubernetes Service, while running a Kubernetes-managed cluster where the containers are deployed from images that are stored in Azure Container Registry. Microsoft recommends using ethical AI tools to detect biased language, which ensures the fair training of a language model. Furthermore, text can be post-processed with specific business rules that are deterministically defined, based on the business goals. NLP post-processing recommends storing the model in a model register in Azure ML, to track model metrics. Once the text is broken up into sentences, NLP techniques, such as lemmatization or stemming, allow the language to be tokenized in a general form.Īs NLP models are already available pre-trained, the transfer learning approach recommends that you download language-specific embeddings and use an industry standard model, for multi-class text classification, such as variations of BERT. NLP pre-processing includes several steps to consume data, with the purpose of text generalization. 
Data can be stored as files within Azure Data Lake Storage or in tabular form in Azure Synapse or Azure SQL Database.Īzure Machine Learning (ML) can connect and read from such sources, to ingest the data into the NLP pipeline for pre-processing, model training, and post-processing. Dataflowĭata is stored in various formats, depending on its original source. Architectureĭownload a Visio file of this architecture. This article describes how you can use Microsoft AI to improve website content tagging accuracy by combining deep learning and natural language processing (NLP) with data on site-specific search terms. If you'd like us to expand the content with more information, such as potential use cases, alternative services, implementation considerations, or pricing guidance, let us know by providing GitHub feedback.
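The pre-processing and rule-based post-processing steps of the dataflow can be illustrated with a small sketch. This is not the article's implementation and it does not call any Azure ML API: the suffix list, the stopword set, the function names, and the example scores are all hypothetical, and the crude suffix stripper only stands in for a real stemmer or lemmatizer. The scores passed to `apply_business_rules` are assumed to come from a multi-class classifier such as a fine-tuned BERT variant.

```python
import re

# Hypothetical, deliberately tiny resources for illustration only.
# A real pipeline would use a library stemmer or lemmatizer instead.
SUFFIXES = ("ing", "ed", "es", "s")
STOPWORDS = {"the", "a", "an", "to", "of", "and", "is", "in",
             "for", "on", "my", "how", "do", "i"}


def split_sentences(text):
    """Break text into sentences at ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text)
    return [p.strip() for p in parts if p.strip()]


def stem(token):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Lowercase, tokenize, drop stopwords, and stem each remaining token."""
    tokens = []
    for sentence in split_sentences(text):
        for word in re.findall(r"[a-z0-9]+", sentence.lower()):
            if word not in STOPWORDS:
                tokens.append(stem(word))
    return tokens


def apply_business_rules(scores, min_score=0.5, max_tags=3, blocked=frozenset()):
    """Deterministic post-processing of classifier scores (tag -> probability).

    Keeps tags at or above min_score, removes blocked tags, and returns
    at most max_tags tags ordered by descending score, then by name.
    """
    kept = [(tag, score) for tag, score in scores.items()
            if score >= min_score and tag not in blocked]
    kept.sort(key=lambda pair: (-pair[1], pair[0]))
    return [tag for tag, _ in kept[:max_tags]]


# Example usage with made-up input text and made-up model scores.
tokens = preprocess("Deploying models on Kubernetes. How do I index the results?")
tags = apply_business_rules(
    {"azure": 0.91, "kubernetes": 0.72, "spam": 0.88, "python": 0.31},
    blocked={"spam"},
)
print(tokens)
print(tags)
```

Keeping the business rules in a plain function, separate from the model, means they stay deterministic and can be changed without retraining.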
